Accelerating Multi-Scalar Multiplication for Efficient Zero Knowledge Proofs with Multi-GPU Systems

Abstract

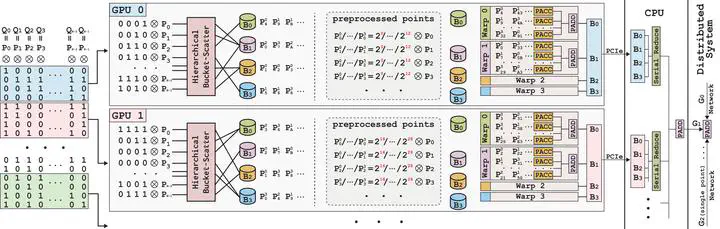

A zero-knowledge proof (ZKP) is a cryptographic primitive that allows a statement to be validated without disclosing any sensitive information, and it is foundational to applications such as verifiable outsourcing and digital currency. However, long proof generation times limit its widespread adoption. Even with GPU acceleration, proof generation can still take minutes, with Multi-Scalar Multiplication (MSM) accounting for about 78.2% of the workload. To address this, we present DistMSM, a novel MSM algorithm tailored for distributed multi-GPU systems. At the algorithmic level, DistMSM adapts Pippenger’s algorithm to multi-GPU setups, identifying and addressing the bottlenecks that emerge during scaling. At the GPU kernel level, DistMSM introduces an elliptic curve arithmetic kernel tailored for contemporary GPU architectures; the kernel reduces register pressure with two novel techniques and leverages tensor cores for specific big-integer multiplications. Compared to state-of-the-art MSM implementations, DistMSM achieves an average 6.39× speedup across various elliptic curves and GPU counts. An MSM task that previously took seconds on a single GPU can now be completed in mere tens of milliseconds. These results showcase the substantial potential and efficiency of distributed multi-GPU systems for ZKP acceleration.
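For readers unfamiliar with the baseline, an MSM computes the sum of k_i · P_i over many scalar/point pairs, and Pippenger's algorithm evaluates it by splitting each scalar into fixed-width windows and accumulating points into buckets. The following is a minimal single-threaded sketch of that textbook bucket method only; it is not the DistMSM multi-GPU variant. The names `pippenger_msm`, `add_mod`, and `MODULUS` are hypothetical, and the additive group of integers modulo `MODULUS` is an assumed stand-in for elliptic curve points so that the result is easy to check.

```python
from typing import Callable, Sequence, TypeVar

G = TypeVar("G")

# Assumption: a toy additive group (integers mod MODULUS) stands in for
# elliptic curve points; a real MSM would use curve point addition here.
MODULUS = 2**61 - 1


def add_mod(a: int, b: int) -> int:
    """Group operation of the toy group."""
    return (a + b) % MODULUS


def pippenger_msm(
    scalars: Sequence[int],
    points: Sequence[G],
    add: Callable[[G, G], G],
    zero: G,
    scalar_bits: int,
    window_bits: int,
) -> G:
    """Compute sum(k_i * P_i) with the bucket (Pippenger) method."""
    num_windows = (scalar_bits + window_bits - 1) // window_bits
    mask = (1 << window_bits) - 1
    result = zero
    # Process windows from most significant to least significant.
    for w in reversed(range(num_windows)):
        # Multiply the partial result by 2**window_bits via repeated doubling.
        for _ in range(window_bits):
            result = add(result, result)
        # Bucket accumulation: each point goes into the bucket indexed by
        # its scalar's digit in this window (digit 0 contributes nothing).
        buckets = [zero] * (mask + 1)
        for k, p in zip(scalars, points):
            digit = (k >> (w * window_bits)) & mask
            if digit:
                buckets[digit] = add(buckets[digit], p)
        # Bucket reduction: compute sum(j * buckets[j]) with running sums,
        # so bucket j is added exactly j times using only additions.
        running = zero
        window_sum = zero
        for j in range(mask, 0, -1):
            running = add(running, buckets[j])
            window_sum = add(window_sum, running)
        result = add(result, window_sum)
    return result
```

As a usage check, calling `pippenger_msm([5, 1023, 77, 0], [3, 11, 123456789, 42], add_mod, 0, 16, 4)` should agree with the naive sum of products reduced modulo `MODULUS`. The bucket accumulation and reduction loops are independent per window, which is the kind of structure a parallel implementation can distribute.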